Open: An Autobiography

Andre Agassi, Erik Davies, et al.

4.7 on Amazon

139 HN comments

Starting Strength: Basic Barbell Training, 3rd edition

Mark Rippetoe and Jason Kelly

4.8 on Amazon

121 HN comments

Born to Run

Christopher McDougall

4.7 on Amazon

82 HN comments

Moby Dick: or, the White Whale

Herman Melville

4.3 on Amazon

75 HN comments

The Inner Game of Tennis: The Classic Guide to the Mental Side of Peak Performance

W. Timothy Gallwey, Zach Kleiman, et al.

4.7 on Amazon

74 HN comments

The Book of Why: The New Science of Cause and Effect

Judea Pearl and Dana Mackenzie

4.4 on Amazon

56 HN comments

The Anarchist Cookbook

William Powell

4.3 on Amazon

56 HN comments

Shoe Dog: A Memoir by the Creator of Nike

Phil Knight, Norbert Leo Butz, et al.

4.8 on Amazon

55 HN comments

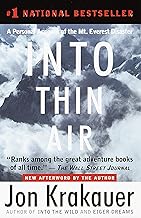

Into Thin Air: A Personal Account of the Mt. Everest Disaster

Jon Krakauer, Randy Rackliff, et al.

4.7 on Amazon

55 HN comments

Deep: Freediving, Renegade Science, and What the Ocean Tells Us About Ourselves

James Nestor

4.7 on Amazon

51 HN comments

The Art of Learning: An Inner Journey to Optimal Performance

Josh Waitzkin and Tim Ferriss

4.4 on Amazon

48 HN comments

K: A History of Baseball in Ten Pitches

Tyler Kepner

4.6 on Amazon

46 HN comments

The Talent Code: Greatness Isn't Born. It's Grown. Here's How.

Daniel Coyle, John Farrell, et al.

4.7 on Amazon

37 HN comments

Moneyball: The Art of Winning an Unfair Game

Michael Lewis

4.7 on Amazon

37 HN comments

The Old Man and the Sea

Ernest Hemingway, Donald Sutherland, et al.

4.6 on Amazon

26 HN comments

mikaelphi on Apr 17, 2020

agbell on Feb 24, 2021

- Deep Questions With Cal Newport

- CoRecursive - Coding Stories (This is my own podcast, so not a listener so much as a creator)

enkiv2 on Aug 5, 2016

sabertoothed on Jan 13, 2021

I am not affiliated with the great work linked here - but I have been working on the general topic as a side project for a few years.

bnchrch on Sep 13, 2018

Deep Work gave me some good insight on how to get the most out of my days.

Sapiens vastly widened and shifted my understanding of the myths that make up our society.

yoquan on Aug 16, 2018

Just a bit more context: the first author wrote about the connection between physics's renormalization group and deep learning, which was featured in [1] 4 years ago.

[1]: https://www.quantamagazine.org/deep-learning-relies-on-renor...

dragandj on Aug 23, 2019

Based on Uncomplicate open source libraries https://github.com/uncomplicate

hrant25 on Dec 29, 2016

batbomb on Aug 22, 2014

http://deepdive.stanford.edu/

bitL on Aug 21, 2018

firasd on July 5, 2017

whiskers08xmt on Mar 14, 2019

If you can do 4 hours of productive work, truly, no distraction, deep productive work, then you're likely way ahead of your peers.

Leave the rest of your time for shallower work, which doesn't require intensity of focus, and make sure to spend some time re-charging, where you focus on not doing work or being connected at all.

jmugan on Jan 13, 2016

kkaranth on Oct 12, 2019

* Deep by James Nestor: A look at the extreme sport of freediving, where contestants train to submerge to depths much greater than 400ft without any oxygen or pressure-equalizing equipment

* The Idea Factory: Bell Labs and the Great Age of American Innovation by Jon Gertner: A great book about the history of Bell Labs and the scientists and engineers who brought great innovations to society, including phones

* Wizard: The Life and Times of Nikola Tesla by Marc Seifer: A biography of Nikola Tesla. It's quite astounding how much one person can achieve in their lifetime

a-saleh on July 13, 2018

1. So good they can't ignore you

2. Deep work

3. Mythical man-month

4. Power of habit

Now I am reading Tools of Titans by Tim Ferriss. I never thought I would get into the genre of self-improvement books, but it seems I like these :-) Even though I am conscious of the fact that I am applying maybe 10% of the books' advice :P

Another thing I am reading is Math from three to seven [1], mostly because I would like to discuss math with my 4yo daughter one day, preferably sooner rather than later, because I find math discussions immensely fun :-D Maybe I will even start a math-circle :-)

[1] http://www.msri.org/people/staff/levy/files/MCL/Zvonkin.pdf

madrafi on May 7, 2017

This is mostly ML. If you would like to dive into deep learning, I think fast.ai is the best course for anyone with programming experience, and you can also use the deeplearning.net tutorial as a side reference.

If you have practical experience and would love to understand the theory behind it, then the Deep Learning Book is the Bible.

MrQuincle on May 15, 2020

1. Go beyond what people tell you. Discover your own truth.

2. The love for tinkering.

3. The idea that what you bought is your own and you can do anything with it.

4. To use something in a way that is completely not how it is intended.

5. Deep reverse engineering dives.

6. The guilty pleasure of picking digital locks.

I think I have books in all those directions.

bit2mask on July 21, 2015

There are also other great resources online, like those I list below:

1.) In-depth introduction to machine learning in 15 hours of expert videos[2]

2.) Deep Learning Tutorial (@ ufldl.Stanford.edu/tutorial/, can't post the link because I'm out of mana, I mean, not enough reputation yet)

[1]: https://github.com/josephmisiti/awesome-machine-learning

[2]: https://www.dataschool.io/15-hours-of-expert-machine-learnin...

rayalez on Sep 6, 2017

It's not even necessary to copy anyone's voice, as long as there's a selection of the most comprehensible and human-sounding ones.

Then, you could even automatically generate a slideshow presentation from a few illustrations and headlines, and that would make "rendering" articles into videos very fast and easy. I'm sure a lot of people would pay for such a service.

----

By the way, I recently encountered Deep Voice 2, a similar research project by Baidu:

http://research.baidu.com/deep-voice-2-multi-speaker-neural-...

Results are very impressive.

_zskd on Nov 20, 2018

>> You sound like you have anxiety problems. What have you done to address your anti-social tendencies? Are you going to a therapist? Do you expect a fairy to fly into your house and magic them away? What job do you think exists where you don't need these skills?

>> Having a therapist does not mean you are crazy, and you don't NEED to be crazy to have one. It means having a neutral person who helps you track and set goals, track your moods, and help you process work relationships and events. Michael Jordan has a coach, brain workers have therapists. ( https://news.ycombinator.com/item?id=18277170 )

One thing I want to make clear is that this is not going to go away without actual effort and planning on your part.

I would recommend going to a therapist and having them help you process your social interactions and set goals for improving yourself. Which, overall, is what a therapist does. Way more than the cliche "Now let's talk about your father..."

A lot of good information in here, as well. Read some books, it's good for you! It makes you smarter! People have taken time to write them for the last thousand years for a reason!

You can spare the time away from social media to read a book, I promise. And the sense of achievement you get from finishing a book feels great.

- "How to Win Friends and Influence People" is a must-read.

- "Getting to Yes" is another excellent book about workplace conflict resolution.

- There are a ton of books about emotional intelligence. Find one that sounds interesting to you and read it.

I'll also recommend "Deep Work" and "Smarter, Faster, Better" for more general workplace productivity management, but feel free to sleep on those if you feel like it.

etempleton on Dec 5, 2020

Are there more distractions now? Certainly, but it is an elitist view to act as if this impacts the amount of deep reading your average citizen of any given country partakes in. If anything, the proliferation of smartphones will increase base-level literacy among the masses globally, giving more individuals from more diverse backgrounds the opportunity to one day engage in deep literacy.

What we are seeing now is more people engaging in conversations and debates that were previously relegated to the elites—academics, politicians, aristocrats. Are their opinions impacted by their lack of deep literacy? Certainly, but their opinions always have been, their voices just weren’t heard quite so loudly before.

saasideas on Dec 1, 2020

Find more about the most profitable and fast-growing SaaS startups, niches where you can compete & win.

KNOW MORE BEFORE YOU START THE NEXT BUSINESS OPPORTUNITY:

We Do The Tedious Work:

We analyze hundreds of companies for you and send only the best ones directly to your inbox. Quick-start your next business opportunity in any market.

Deep Analysis:

This is not just a simple business ideas publication. It's a research-backed market analysis. Everything is fact-checked and verified before we share it with you.

Get All Newsletter Archive:

Any new reader gets not just the latest research and analysis, they also get instant access to all previous publications for even more business opportunities.

4 MORE REASONS WHY YOU SHOULD SUBSCRIBE TO THIS NEWSLETTER:

1- We check that the core of the product can be replicated or improved with reasonable effort.

2- We identify a market that has been proven by companies that have raised serious VC money.

3- We identify opportunities for a down-market niche, determine their UBP (unique buying proposition).

4- We sum it all up for you - giving you the market, niche, UBP analysis, down-market opportunity, and costs.

Thank You

simongray on Oct 18, 2018

Deep Work is more of a how-to book - about how to get ahead in a world that's losing its ability to focus (due to the stuff described in The Shallows).

eggie5 on Nov 29, 2018

* Item-Item CF (workhorse amazon original from 2003 w/ modern enhancements)

* Deep Pooling Models (à la Covington. YouTube Deep Rec.)

Although I'm a bit perplexed as to the difference between the Deep-FM Recipe and the FFNN Recipe, as it describes the same thing...

https://docs.aws.amazon.com/personalize/latest/dg/working-wi...

jph00 on June 10, 2019

If you're interested in diving deeper into the papers and math behind the scenes, as well as coding from the lowest levels (right down to the compiler level), you'll be interested in "Deep Learning from the Foundations", which is coming out in 2 weeks. The last two lessons are co-taught with Chris Lattner (creator of Swift, LLVM, and Clang).

If you want to understand the underlying linear algebra implementation details, have a look at "Computational Linear Algebra for Coders": https://github.com/fastai/numerical-linear-algebra

If you want to learn about decision trees, random forests, linear regression, validation sets, etc, try "Introduction to Machine Learning for Coders": https://course18.fast.ai/ml

(All are free and have no ads. They are provided as a service to the community.)

Let me know if you have any questions!

arthurcolle on Jan 20, 2021

Places to Avoid, list written + maintained by Arthur Collé:

[] Deep space [added: after seeing Gravity]

[] Mariana Trench [added: after seeing Underwater]

[] Amazon rainforest [NEW, added: 2021-01-20]

estebank on Mar 8, 2013

> * Fully interactive virtual world - If it looks usable, it should do something

> * Deep original fiction - Ethical parables, cultural histories, fully developed alternate language text

This reminds me of the first Deus Ex. Wonder if they'll be able to pull it off.

FiberBundle on June 5, 2019

You can also use Deep Learning for this. There are some interesting approaches using Reinforcement Learning [2]

[1] https://www.aclweb.org/anthology/P18-1034

[2] https://arxiv.org/pdf/1709.00103.pdf

Isinlor on Aug 23, 2020

But GPT-3 is able to do few-shot learning. If you show it a couple of times how to do something, it will try to repeat after you, showing some rudimentary understanding of what you are trying to do. It's even able to learn to do simple analogies [0].

GPT-3 is able to do very, very simple arithmetic, like addition and subtraction with 2-3 numbers. Actually, even much smaller models trained on arithmetic are able to do impressive feats like symbolic integration and solving differential equations.

> Deep Learning for Symbolic Mathematics [1]: Neural networks have a reputation for being better at solving statistical or approximate problems than at performing calculations or working with symbolic data. In this paper, we show that they can be surprisingly good at more elaborated tasks in mathematics, such as symbolic integration and solving differential equations. We propose a syntax for representing mathematical problems, and methods for generating large datasets that can be used to train sequence-to-sequence models. We achieve results that outperform commercial Computer Algebra Systems such as Matlab or Mathematica.

What is true is that GPT-3 is not very good at following instructions. It would not be able to execute an algorithm. So, it would not learn arithmetic from instructions if you did not include explicit examples.

[0] https://medium.com/@melaniemitchell.me/can-gpt-3-make-analog...

[1] https://arxiv.org/abs/1912.01412

arstin on Jan 8, 2018

https://examine.com/nutrition/is-saturated-fat-bad-for-me/

You can even find people who are into various forms of "traditional foods" (not diet trends like paleo...though maybe that too?) who go further than more cautious articles like this in affirming -benefits- of saturated fat. Catherine Shanahan, for example, argues in Deep Nutrition that saturated fat can be good.

anjc on Sep 18, 2020

Covington, Paul, Jay Adams, and Emre Sargin. "Deep neural networks for youtube recommendations." Proceedings of the 10th ACM conference on recommender systems. 2016.

Nvidia furthermore implemented/released it for TensorFlow, and for their new Recsys engine:

https://ngc.nvidia.com/catalog/resources/nvidia:wideanddeep_...

etatoby on Aug 25, 2019

2. PowerShell (on Linux). I'm already proficient with the traditional shells, but I want to see if this is overall a better CLI and/or scripting environment than, say, Fish. (This was prompted by the recent post about a similar thing newly implemented in Rust.)

3. APL, getting back on it after a long time and potentially writing my own compiler and dialect, if I feel inspired.

4. Consolidating Japanese, mostly the spoken language, because I don't have the time nor will to memorize 1000s of characters. I'm already intermediate level, so at this point I mainly watch movies / shows every day, hoping some of the vocab will stick to my mind.

andreyk on May 28, 2017

They basically took the same idea and instead of just producing a hack finished a nice full version of the idea - very nice write up!

But they don't have any web UI for it yet, so I am getting tempted to revive my dormant side project and likewise make a proper polished version of it with a web interface. If anyone on here is a talented web dev with an interest in working on this as a side project (for free, and for fun, though we could explore monetization if it works), feel free to get in touch! (PS: extra get in touch if you are near the South Bay Area/Stanford.)

PS Incidentally, I independently came up with the same idea as in Deep Interactive Object Segmentation (https://arxiv.org/abs/1603.04042) and implemented it for my Stanford CS 229 (Machine Learning) project first - the hack came later. The ObjectCropBot hack allows only cropping and not clicking because it was faster to hack in that, but I think it is ideal to allow both cropping to constrict around the target object and clicking (clicking alone leaves too much ambiguity, e.g., do you want to crop the person, or their shirt, or a subset of that shirt).

Dimitris on Aug 13, 2018

I would also recommend going through the scikit-learn documentation. Some of the tutorials/examples there are pretty good.

At the end of the day, it all comes down to your personal learning style. For me the thing that worked was to go through the above mentioned steps and then find a problem I was interested in and try to solve it using my newly found skills. That way you will discover new tools and methods.

Finally, the Deep Learning [2] book is also very good but I would not recommend it to a beginner. It's better to use it when you have a basic understanding of Machine Learning and you want to gain a deeper understanding of the concepts.

[1]: https://www.manning.com/books/deep-learning-with-python

[2]: https://www.deeplearningbook.org/

fredleblanc on July 17, 2017

* Deep Sea Adventure — A tiny push-your-luck game that blends theme and mechanics perfectly, is easy to explain, and is a ton of fun without being too thinky. It also scales perfectly from 2 to 6 people. Expensive if you're just counting components, but the value of the game and the fun it brings makes it worthwhile.

* Celestia — Also push-your-luck style, but with a bit more production (and, oddly, cheaper than Deep Sea Adventure).

* Patchwork — A great little two-player game where you're making a quilt. That sounds silly, but it's like Tetris that's powering a small economical engine. Really fun.

* For Sale — Good for 3-6 players, a really simple little card game where you get properties in the first round and auction them off in the second. Plays in 15 minutes, fits in a small box. (I think they even make a travel version.)

* Santorini — the retail version is a big production, but if you get it, you could easily make a smaller version of it. Takes 30 seconds to learn and is hard to really master. Comes with a ton of God power cards that make a bunch of replayability.

* Smash Up — the base set comes with 8 factions. Each player takes two factions (of their choice, at random, etc.) and smashes them into a deck. You then compete against others to topple bases and score points. A lot of fun with the right crowd. I think they've released about 50 factions total so far, with 8 new ones released each year currently. All totally optional.

* Star Realms — great little two-player deck builder. It's space themed, if you want another theme, they have other versions that work similarly. It's just one deck of cards, plus expansions if you like it.

* Dixit — I love Dixit. I've never played it with anyone that didn't have fun. The box really spreads things out, and is mostly holding the scoring track, but you could replace that with something smaller easily. A deck of amazing art cards, a couple of voting tokens, and a way to keep score. Could fit into a sandwich bag if you create a smaller scoring system.

cvs268 on Nov 3, 2017

* A few interesting bits of code:

* Check out the source at https://github.com/TheCodeArtist/deep-thought-tabs/tree/mast... to learn how to create WebExtension add-ons for Firefox in pure JavaScript (no external libs).

Normille on July 28, 2020

“There is no problem,” said Deep Thought with magnificent ringing tones. “I am simply the second greatest computer in the Universe of Space and Time.”

“But the second?” insisted Lunkwill. “Why do you keep saying the second? You’re surely not thinking of the Multicorticoid Perspicutron Titan Muller are you? Or the Pondermatic? Or the . . .”

Contemptuous lights flashed across the computer’s console.

“I spare not a single unit of thought on these cybernetic simpletons!” he boomed. “I speak of none but the computer that is to come after me!”

Fook was losing patience. He pushed his notebook aside and muttered, “I think this is getting needlessly messianic.”

“You know nothing of future time,” pronounced Deep Thought, “and yet in my teeming circuitry I can navigate the infinite delta streams of future probability and see that there must one day come a computer whose merest operational parameters I am not worthy to calculate, but which it will be my fate eventually to design.”

ashkat on July 31, 2017

I found the top-down approach to be very effective in keeping me motivated as I worked my way through the course.

It took me 6 months of watching (and rewatching) the videos and working on problems to get comfortable.

I have done a few MOOCs: Andrew Ng's machine learning, Coursera ML specialisation, edX Analytics Edge, and all of them were good learning experiences, but fast ai's deep learning part 1 really stood out.

For me, the combination of Deep Learning Book + Fast ai MOOC + CS231n (youtube videos & assignments) cover almost everything I want to learn about the subject.

@jph00, I'm half way through neural style transfer and I am loving it.

justifier on Nov 19, 2018

sorry bill but one could argue in a round about way that andrej karpathy did do just that ;P

the hot dog identifying app is one of my favourite examples of how spot on the show is

here(o) is a question i asked to one of the show's technical consultants whether the choice of 'not hotdog' was a reference to one of karpathy's early demos(i||ii)

timanglade> Ha seems like a fun coincidence.

(o) https://news.ycombinator.com/item?id=14639161 the yt link is now a dead link.. use either of the below

(i) https://www.youtube.com/watch?v=u6aEYuemt0M&t=465 ; Title: Deep Learning for Computer Vision (Andrej Karpathy, OpenAI) ; Desc: The talks at the Deep Learning School on September 24/25, 2016 were amazing. I clipped out individual talks from the full live streams and provided links to each below in case that's useful for people who want to watch specific talks several times (like I do).

(ii) https://www.youtube.com/watch?v=eyovmAtoUx0&t=5787 ; timestamped from the full stream; Title: Bay Area Deep Learning School Day 1 at CEMEX auditorium, Stanford ; Desc: Day 1 of Bay Area Deep Learning School featuring speakers Hugo Larochelle, Andrej Karpathy, Richard Socher, Sherry Moore, Ruslan Salakhutdinov and Andrew Ng. ;

warabe on Feb 8, 2018

I started the Deep Learning Specialization on Coursera: https://www.coursera.org/specializations/deep-learning last month and almost finished it, but I realized this field requires a lot of expertise, not something you can learn in a month. What I learned in the courses was just basic topics in deep learning and how to use NumPy, TensorFlow and Keras.

I was considering diving into a Data Science job and started that specialization as a starting point, but I just realized how foolish I am. Chances are I'll find a job, but it definitely takes another 10 years/10,000 hours to master this discipline.

Anyway, the specialization is wonderful and Dr. Ng explains complicated deep learning topics in a way that is understandable for everyone. So if just learning is what you want, you should take it, but I don't think you are prepared for a real-world Data Science job after finishing it.

michael_nielsen on Oct 26, 2013

* The book incorporates lots of running code for readers to explore and extend.

* The book's philosophy is to go deep into the core concepts of deep learning, not to superficially cover a long laundry list of ideas. This gives readers a solid foundation to build on, and makes understanding other material much easier.

* Deep learning is the most powerful approach known to many problems in image recognition, speech recognition, and natural language. The book will help lots more people get quickly up to speed.

The book will be freely available online, and a beta site is coming soon. Pre-beta mailing list here: http://eepurl.com/BYr9L

diego on Apr 1, 2012

Our search back-end team is growing, and we are looking for an experienced manager for it. Job description pasted below.

Email dbasch AND iperisic at linkedin dot com

Responsibilities:

· You will lead an innovative team of software developers to design, build and maintain our search infrastructure.

· You'll lead through uncertainty and collaborate with other engineering teams, Business Development, Legal, and teams across the organization to make things happen to achieve desired results.

Requirements:

· Deep Software Technical Skills: You are deeply technical, understand how to break down problems and design extensible solutions. You have ample development experience in Java (preferably also C++ or other JVM-based languages such as Scala), as well as scripting languages. You know how to scale systems to a billion calls per day, how to parallelize requests, and how to build infrastructure and APIs for software services. You have experience building large-scale information retrieval solutions, and understand search engines inside and out. You are intimately familiar with concepts such as garbage collection algorithms, parsing, lexing, building inverted indexes, ranking algorithms, etc. You have deep knowledge of the fundamental concepts discussed in books such as Modern Information Retrieval or Managing Gigabytes.

· You likely have a BS, MS or PhD in Computer Science or closely related field.

· Management and Cross-Organizational Influence: You have demonstrated successful leadership in building and leading small, high-performance engineering teams. You understand the value of relationships and are highly effective at influencing cross-organizational teams to implement internal component APIs to meet the needs of external consumers. You communicate effectively to all levels inside the company and with partners.

· You're a very hands-on technical manager who can influence and lead the architecture, design and development of a scalable, high performance, and high reliability platform.

igomez88 on Jan 22, 2021

Ari Bornstein is Head of Developer Advocacy at Grid.ai and PyTorch Lightning. Previously, Ari scaled Microsoft's AI/ML global advocacy efforts as an Open Source Engineer.

Ari's Computer Science Masters Research at Bar-Ilan University concentrated on AI and NLP with an emphasis on multi-document semantic representation, summarization and coreference resolution. He also holds dual degrees in History and Computer Science from Goucher College.

Follow Ari:

@pythiccoder

https://www.linkedin.com/in/aaron-ari-bornstein-22aa7a77/

YeGoblynQueenne on Jan 24, 2020

https://waset.org/bayesian-statistics-for-machine-learning-c...

What struck me was a section called "Special Journal Issues":

Special Journal Issues

ICBSML 2020 has teamed up with the Special Journal Issue on Bayesian Statistics for Machine Learning. A number of selected high-impact full text papers will also be considered for the special journal issues. All submitted papers will have the opportunity to be considered for this Special Journal Issue.

Of course there is no such thing as a "Special Journal Issue". There are special issues of particular journals, for example the Special Issue of the Machine Learning Journal on Learning and Reasoning (that I was looking for when I stumbled on this page).

Every single conference on the site has the same text in this section... and in every other section with minor variations.

There are more hints:

1) The names of the conferences seem to have been generated by prepending "International Conference" in front of legit conference names.

2) The "Call for papers" page is just a list of subject fields.

3) The "Selected papers" don't have anything to do with the actual conference subjects and are in fact scraped from other conference and journal sites. For example, in the "ICBSML" page there's a paper titled:

Deep Learning Based Fall Detection Using Simplified Human Posture

But if you click the link you see a pdf with this rubric on top:

4) etc etc.

It's a scam.

abeppu on Nov 18, 2015

A while ago there was the automatic statistician [1,2] which can do various statistical analyses and reporting automatically. This year there was a paper out of MIT on Deep Feature Synthesis, in which a largely automated system did quite well on Kaggle problems [3]. Now this, which seems like it could produce solutions to some problems I've used in technical phone screens.

At some point, someone will write a framework which automates the process of finding human cognitive tasks to automate, and someone else will write a cost function of automated-task-to-business-need-mismatch which is amenable to optimization, and then we can all go home.

[1] http://www.automaticstatistician.com/index/

[2] http://mlg.eng.cam.ac.uk/lloyd/talks/jrl-auto-stat-msr-2014....

[3] https://groups.csail.mit.edu/EVO-DesignOpt/groupWebSite/uplo...

nabla9 on June 27, 2016

Here is a good authoritative definition:

"Deep-learning methods are representation-learning methods with multiple levels of representation, obtained by composing simple but non-linear modules that each transform the representation at one level (starting with the raw input) into a representation at a higher, slightly more abstract level." from Deep Learning, Nature Volume 521 issue 7553, 2015, LeCun, Yann; Bengio, Yoshua; Hinton, Geoffrey

History: Multi-layer neural networks, convolutional networks, recurrent networks, etc. are old techniques that have existed for decades. They used to be very slow, and using them seemed to be a theoretical dead end. The "Canadian Mafia" (Geoffrey Hinton, Yann LeCun and Yoshua Bengio) from University of Montreal worked diligently, solved many theoretical and practical problems, and made these algorithms usable in practice. GPGPUs hastened this process.
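To make the quoted definition concrete, here is a minimal sketch of "composing simple but non-linear modules" in plain Python. The names `make_layer` and `compose`, the choice of tanh as the non-linearity, and the hand-picked weights are all assumptions for illustration; a real network would learn its weights from data.

```python
import math
from functools import reduce

def make_layer(weights, biases):
    """Return one module: an affine map followed by a tanh non-linearity."""
    def layer(x):
        return [
            math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, biases)
        ]
    return layer

def compose(*layers):
    """Chain modules so each one transforms the previous representation."""
    return lambda x: reduce(lambda rep, layer: layer(rep), layers, x)

# Hypothetical hand-picked weights (illustration only, not learned).
layer1 = make_layer([[0.5, -0.2], [0.1, 0.8], [-0.3, 0.4]], [0.0, 0.1, -0.1])  # 2 -> 3
layer2 = make_layer([[1.0, -1.0, 0.5]], [0.2])                                 # 3 -> 1
network = compose(layer1, layer2)

raw_input = [1.0, 2.0]
hidden = layer1(raw_input)   # representation one level above the raw input
output = network(raw_input)  # representation after both modules
```

Each call to a layer yields a new representation of the input; stacking more layers in `compose` is what makes the model "deep".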

brandonb on Jan 2, 2017

Deep learning practitioners are aware that they're using non-convex optimization, stochastic gradient descent, and so on. In fact, those topics are core to modern deep learning research and explicitly acknowledged in most published research: LSTMs were invented to solve the vanishing gradient problem, Restricted Boltzmann Machines were used as a pre-training step to avoid local minima, and optimizers like ADAM have explicit guarantees about things like convergence.

You may know all this stuff already--not sure, based on your comment above.

If not, here are a few example papers from mainstream AI researchers explicitly talking about deep learning as an optimization problem or function approximator:

[1] Why Does Unsupervised Pre-training Help Deep Learning? http://www.jmlr.org/papers/volume11/erhan10a/erhan10a.pdf

[2] Multilayer feedforward networks are universal approximators: http://www.sciencedirect.com/science/article/pii/08936080899...

[3] Deep Learning, Nature. http://www.cs.toronto.edu/~hinton/absps/NatureDeepReview.pdf

[4] Adam: A Method for Stochastic Optimization. https://arxiv.org/abs/1412.6980
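As a rough illustration of the optimization view, the sketch below applies the Adam update rule (as described in [4]) to a one-dimensional toy objective, f(x) = (x - 3)^2. The function name `adam_minimize`, the toy objective, and the step count are assumptions made for this example; the hyperparameter defaults other than the learning rate follow the values suggested in the paper.

```python
import math

def adam_minimize(grad, x0, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=1000):
    """Minimize a 1-D function given its gradient, using the Adam update rule."""
    x, m, v = x0, 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad(x)
        m = beta1 * m + (1 - beta1) * g        # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g    # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)           # bias correction for zero init
        v_hat = v / (1 - beta2 ** t)
        x -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return x

# Toy objective (an assumption): f(x) = (x - 3)^2, so f'(x) = 2 * (x - 3).
x_min = adam_minimize(lambda x: 2 * (x - 3.0), x0=0.0)
```

This toy problem is convex, so it says nothing about the non-convex case discussed above; it only shows the mechanics of the update.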

leblancfg on Oct 26, 2016

[1] Deep Visual-Semantic Alignments for Generating Image Descriptions - cs.stanford.edu/people/karpathy/cvpr2015.pdf

[2] Deep Learning for Content-Based Image Retrieval - www.research.larc.smu.edu.sg/mlg/papers/MM14-fp336-hoi.pdf

[3] Deep Learning for Content-Based Image Retrieval - www.cs.rutgers.edu/~elgammal/pub/MTA_2014_Saleh.pdf

aub3bhat on Aug 18, 2017

[1] https://github.com/AKSHAYUBHAT/DeepVideoAnalytics

albertzeyer on Feb 16, 2015

neilsharma on July 6, 2014

2. Deep Breathing - I’m trying to meditate, but my mind wanders after a minute or two. Trying to fight off the countless notifications, chrome tabs, noises, etc that have shortened my attention span. Goal is to hit 10 minutes of focusing on nothing.